Genie Portal - Global Market App

Company: Unilever (a global consumer goods company operating across multiple markets, with global technology functions).

Role: Lead UX Researcher

Project duration: 8 months

Design team size: Solo UX Researcher

Skills: Stakeholder interviews, service blueprinting, and journey mapping

Output & impact: High-fidelity prototype ready for development

Summary/Context

The product owner commissioned a one-month engagement to build the Digital Genie Portal: a platform consolidating digital marketing resources for Unilever's marketing teams. The vision was clearly formed but had no research behind it.

I challenged the brief and negotiated a two-week discovery sprint before any design began. The sprint revealed that the proposed concept did not reflect actual user needs and that the real problem was fundamentally different, so the scope had to be reframed before a single screen was designed.

Research questions

- Who are the real end users, and what are their distinct roles and goals?

- What is the current end-to-end process for requesting and assessing digital marketing technologies, and where does it break down?

- Do marketers and technology teams frame digital requests differently?

- Does the original portal concept address users' primary pain points?

- What is the most critical stage to address first?

Research goals

- Map all user groups across brand teams in each market and the global technology and marketing functions.

- Document the as-is process, including participants, handoffs, tools, and pain points.

- Determine whether marketing and technology users have different needs or just different vocabularies.

- Validate or challenge the original concept against real user needs and business constraints.

- Identify where friction is greatest and recommend a phased delivery approach.

Challenges

Challenge: Tight one-month timeline with a fully formed product vision, leaving little room for research. Adaptation: Framed discovery as risk reduction, not delay, and successfully negotiated a two-week sprint.

Challenge: Stakeholders could not articulate who the users were or what they needed. Adaptation: Surfaced the user ecosystem through interviews and process walkthroughs, identifying participants not in the original brief.

Challenge: The assessment process involved up to ten participants, spanned a month, and ran entirely on Excel spreadsheets. Adaptation: Produced a combined journey map and service blueprint, used in workshops to prioritise scope collectively rather than attempting a full redesign at once.

Challenge: Marketers and technologists described the same process in entirely different ways. Adaptation: Recommended a dual-entry architecture: two starting points converging into one shared workflow, rather than two separate tools.

Approach

Stakeholder Interviews (8–10 participants · Global and market-level · Discovery phase)

Semi-structured interviews with the global technology and marketing functions and with market-level brand teams. Several participants were identified through snowball referrals rather than the original brief. Key findings: Two distinct user groups reached the same process from different entry points. Both experienced significant delays at the assessment stage, which was entirely manual.

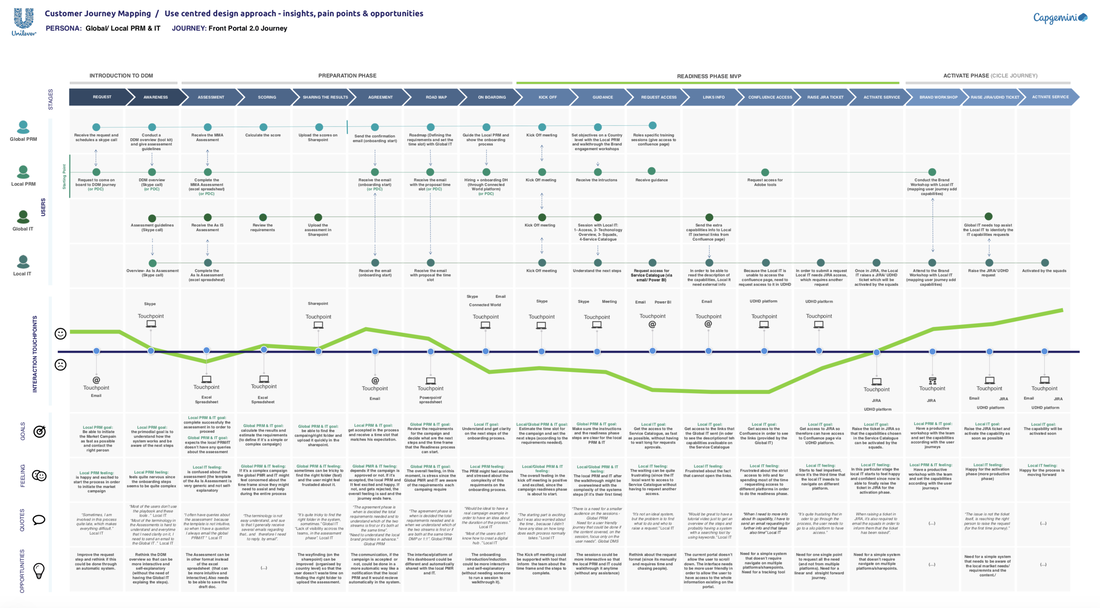

User Journey Map & Service Blueprint (Co-created with stakeholders · As-is & to-be · Discovery phase)

Mapped the full lifecycle from campaign ideation through request, assessment, approval, and recommendation, and used the map in workshops to facilitate stakeholder prioritisation. Key findings: Stakeholders unanimously identified the assessment stage as the highest-pain touchpoint: multi-participant, month-long, and untracked. It was agreed as the MVP priority.

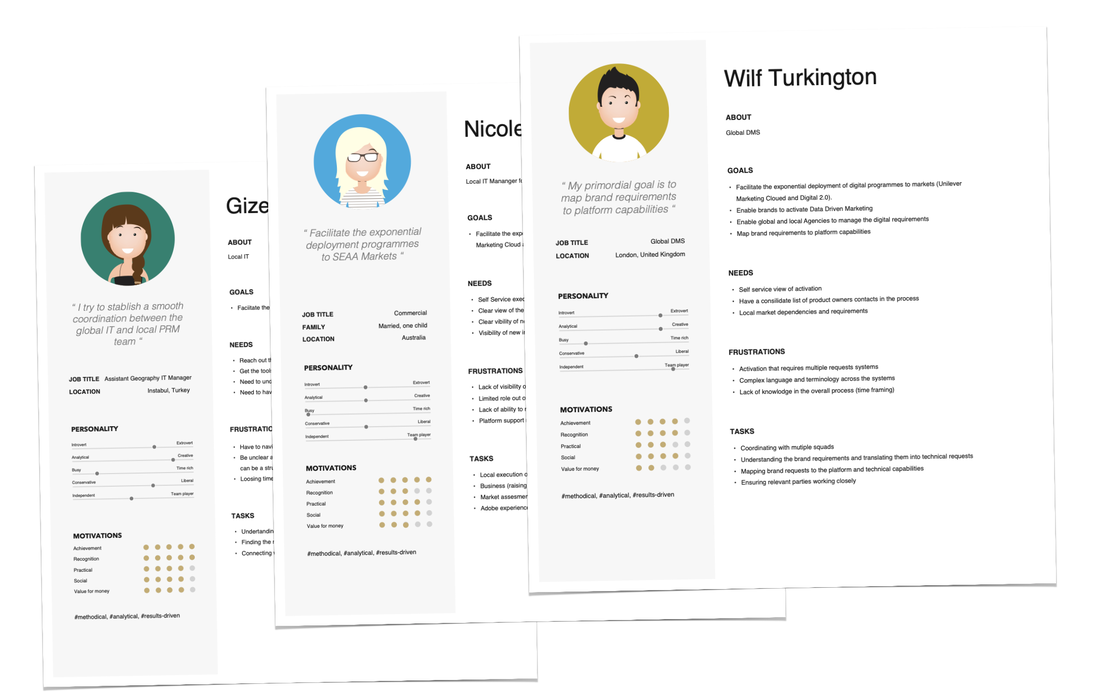

User Personas (3 personas · Research-validated · Discovery phase)

Three personas derived from interview synthesis: a market-level brand marketer, a global technology manager, and a global marketing strategist. Key findings: Marketers used business-case language; technologists used feature-level language. Both described the same underlying need, confirming the case for the dual-entry model.

Iterative Prototyping & Usability Testing (Multiple sprint iterations · Task-based testing · Design phase)

Low- and mid-fidelity prototypes of the assessment module were tested with both user groups, focusing on entry points, form clarity, and the draft-save and collaboration features. Key findings: Participants found the dual-entry distinction intuitive for their role. Draft-save was described as essential. Terminology needed refinement across rounds.

Content & Terminology Co-design Sessions (Global team SMEs · Collaborative workshops · Design phase)

Sessions with global technology SMEs to agree on standardised terminology throughout the assessment module, addressing a root cause of confusion in the existing process. Key findings: Ambiguous and market-specific terms were replaced with a shared glossary, which reduced cognitive load and improved task completion in subsequent testing.

Critical insights

RQ1 — Who are the real users? A far broader group than scoped: market-level brand teams plus two distinct global functions (technology and marketing), each with a different role within the same process.

RQ2 — Where does the process break down? At the assessment stage, which was manual and Excel-dependent, involved up to ten participants, had no shared tracking, and routinely took a month.

RQ3 — Do the two groups frame requests differently? Yes, but the underlying need is identical. Different entry points, not different destinations, were required.

RQ4 — Did the original concept address user needs? No. It focused on consolidating resources; the real need was a structured digital mechanism for submitting requests and completing collaborative assessments.

RQ5 — What should be addressed first? The assessment stage: it offered the highest impact-to-effort ratio and was agreed with stakeholders as the MVP priority.

Deliverables

- Discovery report with scope recommendation

- Three validated user personas

- As-is and to-be journey map / service blueprint

- Dual-entry platform architecture

- Iterated assessment module prototype

- Usability testing reports (multiple rounds)

- Standardised terminology glossary

- Agile delivery roadmap

Impact

- Scope redefined before any design investment, preventing costly misalignment.

- Month-long, Excel-based assessment redesigned as a collaborative digital workflow.

- Both user groups served within a single platform.

- Agile delivery model adopted for incremental, validated releases.

- Cross-functional alignment achieved through co-design and shared artefacts.